TOMSK, Apr 13 – RIA

Tomsk-based company Elecard is creating a new technology for controlling autonomous vehicles. It is a cloud, a kind of "taken-out brain" that can control objects remotely in real time. On the one hand, this will make the roads of the future safer; on the other, it will reduce the energy consumption of the robots themselves. Details are in this RIA Tomsk report.

How autonomous vehicles are developing

There are two basic concepts in the development of unmanned vehicles. One is proposed by the Japanese, who believe that an active infrastructure must be built: on every section of road, on every sign, on every obstacle there should be active sensors that signal to the car, "There is such-and-such a restriction here." But it is clear what this would cost...

The second concept assumes that the car itself can navigate in space with the help of so-called technical vision, based on video camera sensors. To do this, its built-in "brain" (a mobile processor) must be taught to recognize signs, obstacles, pedestrians and other vehicles.

"And here the question arises: should we train this particular mobile brain and constantly adapt it, or give it the opportunity, first, to share its accumulated experience with some external system (conventionally speaking, a 'taken-out brain') and, second, to receive movement recommendations from that system,"

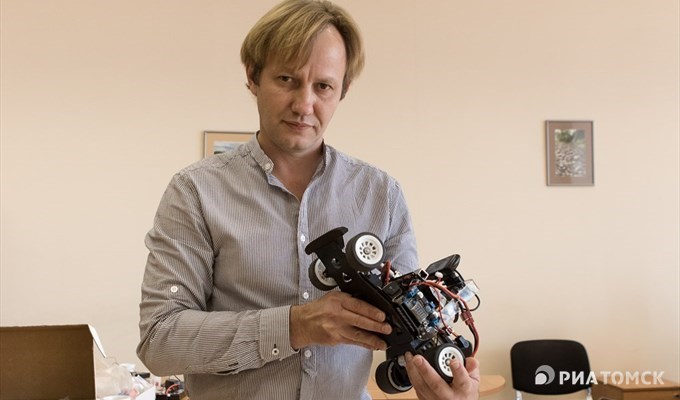

says Victor Shirshin, director of development at Elecard nanoDevices.

In the future, he says, there will be hundreds or thousands of times more unmanned objects, and once a third coordinate is added, when they start flying, the task of safely "breaking through" this traffic may become too complex for the built-in "brain".

"That's why we came up with the idea of an external moderator; we discussed it and implemented a partially taken-out artificial intelligence algorithm. In essence, it is a distributed information cloud, an artificial intelligence that can optimally organize the logistics of movement," says Shirshin.

© RIA Tomsk. Pavel Stefansky

Elecard began working on technical vision systems about three years ago. Such systems are needed not only for autonomous vehicles but for any self-moving object: an anthropomorphic robot, a garbage collector and so on.

Say an unmanned vehicle is on the road. The system determines that another unmanned object is about to "come up" on the right and recommends changing speed or making a maneuver. Meanwhile, the "mobile brain" on board does not spend energy on its own calculations.
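The article does not say how Elecard's system decides that another object is about to "come up". Purely as an illustration, here is a minimal Python sketch of one common way a central moderator could flag such a conflict. It assumes each object reports a 2D position and velocity, that motion is constant-velocity, and that a conflict is a closest point of approach below a safety distance; the `Track` format, the 5 m distance and the 10 s horizon are hypothetical, not Elecard's parameters.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Track:
    """State an object reports to the cloud (hypothetical format)."""
    obj_id: str
    x: float   # position, metres
    y: float
    vx: float  # velocity, metres per second
    vy: float


def closest_approach(a: Track, b: Track) -> tuple[float, float]:
    """Return (time_s, distance_m) of closest approach, assuming constant velocities."""
    rx, ry = b.x - a.x, b.y - a.y        # position of b relative to a
    vx, vy = b.vx - a.vx, b.vy - a.vy    # velocity of b relative to a
    v2 = vx * vx + vy * vy
    # With zero relative velocity the distance never changes; otherwise
    # take the time that minimises distance, clamped to the future.
    t = 0.0 if v2 == 0 else max(0.0, -(rx * vx + ry * vy) / v2)
    return t, math.hypot(rx + vx * t, ry + vy * t)


def recommend(a: Track, others: list[Track],
              safe_dist: float = 5.0, horizon_s: float = 10.0) -> str:
    """Recommendation for object `a`; thresholds are illustrative assumptions."""
    for b in others:
        t, d = closest_approach(a, b)
        if t <= horizon_s and d < safe_dist:
            return f"slow down: {b.obj_id} within {d:.1f} m in {t:.1f} s"
    return "keep course"
```

For example, a vehicle at (0, 0) heading east at 10 m/s and an object at (50, -50) heading north at 10 m/s, that is, approaching from the right, would meet after 5 s, so `recommend` returns a slow-down message; the on-board processor only has to act on it rather than compute it.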

What technologies are needed to make the "taken-out brain" work

An ordinary driver's reaction time to an abnormal situation is about one second.

For a robotic system this is unacceptable: it must react almost instantly. In other words, the signal "from brain to brain" must be transmitted with minimal delay, and for this, according to Shirshin, the next generation of communication, 5G, is needed, where this delay is only one millisecond (one thousandth of a second).

"The introduction of 5G will allow us to control fast-moving objects online. We expect the new standard to be adopted by about 2020," he said.
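To put the one-second and one-millisecond figures in perspective, here is a worked example of how far a vehicle travels before any reaction begins. The speed of 72 km/h is an assumption chosen only because it gives round numbers; braking and processing time are ignored.

```latex
\begin{aligned}
v &= 72~\text{km/h} = \frac{72\,000~\text{m}}{3600~\text{s}} = 20~\text{m/s} \\
d &= v \cdot t_{\text{delay}} \\
d_{\text{driver}} &= 20~\text{m/s} \times 1~\text{s} = 20~\text{m} \\
d_{\text{5G}} &= 20~\text{m/s} \times 0.001~\text{s} = 0.02~\text{m} = 2~\text{cm}
\end{aligned}
```

At that speed, a one-second delay means about 20 m of uncontrolled travel, while a one-millisecond link means about 2 cm.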

Data processing speeds must also change qualitatively, because thousands, even millions, of self-moving objects will be connected to the remote control system, and the behavior of each of them must be analyzed continuously.

"Here, too, there is good news: at the end of March in San Jose, NVIDIA (the leader in computing for artificial intelligence) introduced a number of innovations that will multiply the performance of computing boards while cutting the energy cost of computing 18-fold. It is a revolutionary step into the future!" Shirshin emphasizes.

Konstantin Belyakov, vice-rector for innovation at Tomsk State University (TSU), confirms:

"5G technology will radically change the interaction of self-moving, self-changing, self-aware, decision-making robots and Robots (written with a capital R, meaning robots that have the right, as subjects, to make their own decisions)."

He admits that at this stage Russia is unlikely to contribute anything substantial to 5G as defined by the 3GPP alliance: we have no concept of our own for developing fifth-generation cellular equipment, and it is most likely too late to catch up here. But we can compete in technologies built on this future standard:

"One may ask: why do this if we can use ready-made solutions? I think it is simply vital: we must avoid technological colonial dependence. Either we get ahead of, or at least keep abreast of, the rest of the world and work out the rules of the game together, or after a while we will simply have to obey rules imposed on us," Belyakov said.

What is being done in Tomsk

Igor Sokolovsky, head of the Tomsk cluster "Information Technologies and Electronics of the Tomsk Region", says that a number of teams in the region are working on technical vision (synthetic vision systems): the companies RPC "Technique of Business", "DiViLine", "Intec", "Software Crystal" and "Popkov Robotics" (part of the Elecard group), as well as three research groups at TUSUR and one at TSU.

He believes that in narrow segments of technical vision these developments are at world level:

"For example, 'Technique of Business' develops face recognition systems based on deep-learning neural networks, and 'Intec' develops augmented reality for technical vision. TUSUR's laboratory of television devices works on stroboscopic vision systems that can see through fog. Yakubov's laboratory at TSU specializes in radio vision, developing radars that build a 3D map of space."

Victor Shirshin says that the expertise of all the companies working on technical-vision systems can help implement the idea of a global "taken-out brain":

"Writing the artificial intelligence software itself is not the main problem. It is much more difficult to gather the information on which this artificial intelligence will be trained. At one point we tried to use, for this purpose, the machines with technical vision that our company, Popkov Robotics, made for educational robotics kits."

He explains:

"These machines are distributed to a large number of users, each of whom drives them along model roads under different conditions (lighting, glare, obstacles); this experience can be accumulated and collected in one place. This distributed database can then be used by every newly connected artificial intelligence. It is as if a child, barely born, had already received all the experience of its ancestors and could already walk, talk and know the traffic rules."
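Shirshin does not describe how the shared database is organized. A minimal Python sketch of the idea, assuming each robot uploads labelled observations tagged with the driving condition and that a newly connected agent simply downloads the pooled set; the `Sample` fields and the `SharedExperienceStore` class are hypothetical illustrations, not part of Elecard's or Popkov Robotics' software.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One labelled observation from a robot's camera (hypothetical format)."""
    condition: str   # driving condition, e.g. "glare" or "low_light"
    label: str       # what was actually there, e.g. "stop_sign"
    image_ref: str   # pointer to the stored frame


class SharedExperienceStore:
    """Central pool that merges experience uploaded by many robots."""

    def __init__(self) -> None:
        self._by_condition: dict[str, list[Sample]] = defaultdict(list)
        self._seen: set[Sample] = set()

    def contribute(self, samples: list[Sample]) -> int:
        """Add samples from one robot, skipping exact duplicates; return how many were new."""
        added = 0
        for s in samples:
            if s not in self._seen:
                self._seen.add(s)
                self._by_condition[s.condition].append(s)
                added += 1
        return added

    def bootstrap(self) -> dict[str, list[Sample]]:
        """Everything a newly connected agent can train on, grouped by condition."""
        return {c: list(v) for c, v in self._by_condition.items()}
```

Grouping by condition lets a new agent check, for instance, how much glare or low-light experience the pool already holds before training. Deduplication here is by exact match only; storage, privacy and label quality are left out of the sketch.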

Now Elecard is scaling up this idea: it initiated the creation of the Alliance of Technical Vision Companies, which brings together developers of systems based on technical vision. It already has 13 members, so far only from Russia (although there are plans to make it international). The alliance is part of the Tomsk SMART Technologies cluster.

"Each company in the alliance develops in its own narrow sector related to technical vision. Today we already have a fairly wide range of developments. As soon as we manage to consolidate them in a single software environment so that they integrate easily with one another, every company that joins the alliance will receive the competence of all its members," Shirshin said.

In Tomsk, the part of the cloud where knowledge will be accumulated has been placed in one of Tomsk State University's data processing centers. Similar nodes will later appear in other parts of the country.

© RIA Tomsk. Pavel Stefansky

Victor Shirshin: "Connecting robots to the cloud will not be a problem in the future. But people have to be persuaded to join the alliance, because everyone wants to be first. Breakthrough technological problems, however, must be solved together. On your own, you can lose."